Can we get a single neuron to memorize a spike train? Well... probably not. To a limited extent a neuron may adjust through alterations in its conductances, but these adaptations are mostly not specific enough for the resolution we want. Synaptic plasticity occurs on a time scale of milliseconds, and we're looking for something more in the microsecond range, or even nanoseconds. The time constants and resonances of a neuron cannot easily be modified on a long-term basis. Synaptic strength, however, can be, and with a judicious combination of periodic behaviors we can synthesize just about any desired waveform. We should therefore ask how populations of neurons can generate sequential behaviors. The rhythms in individual neurons will play an important role in this consideration.
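
The idea that periodic components can be combined into almost any waveform is Fourier synthesis. As a minimal illustration (a signal-processing sketch, not a neural model), the code below builds a square wave from its odd sine harmonics. The function and variable names are our own.

```python
import numpy as np

def square_wave_partial_sum(t, f0, n_harmonics):
    """Sum the first n_harmonics odd sine harmonics of a unit square wave
    with fundamental frequency f0 (Fourier synthesis)."""
    y = np.zeros_like(t, dtype=float)
    for k in range(n_harmonics):
        n = 2 * k + 1                     # a square wave contains only odd harmonics
        y += (4.0 / (np.pi * n)) * np.sin(2 * np.pi * n * f0 * t)
    return y

t = np.linspace(0.0, 1.0, 2001)           # one period of a 1 Hz wave
target = np.sign(np.sin(2 * np.pi * t))    # the waveform we want to synthesize
window = (t >= 0.1) & (t <= 0.4)           # stay away from the jumps at t = 0 and 0.5
err_3 = np.max(np.abs(square_wave_partial_sum(t, 1.0, 3) - target)[window])
err_50 = np.max(np.abs(square_wave_partial_sum(t, 1.0, 50) - target)[window])
```

Adding harmonics brings the sum closer to the target everywhere except right at the jumps, where the Gibbs overshoot remains.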

Brain "waves" are detectable on the scalp, but there are many other rhythms in the brain that are not as easily detectable. Some of these arise from the combination of ion channels inside individual neurons, while others arise from the coupling of neurons in populations. An understanding of neuronal rhythms is important when studying brain function. There are many forms of processing and encoding/decoding that depend on activity "relative to" some rhythm. Examples abound, especially at low frequencies.

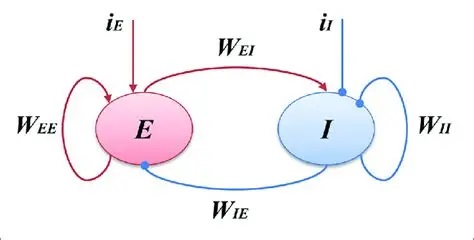

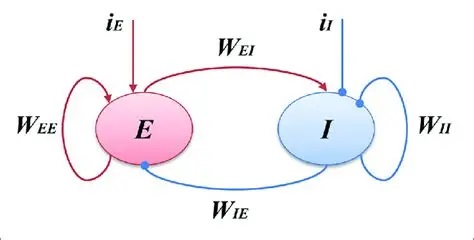

Types of Rhythmic Behavior

To introduce population behavior, we can look at the early Wilson-Cowan model (Wilson and Cowan 1972) of neural populations, which describes the simplest form of population synchronization. To see rhythmic behavior in a population of omniconnected excitatory and inhibitory neurons, all one has to do is adjust the synaptic weights into the right range.

Populations E and I can be of any size. The parameters W are the synaptic weight matrices that interconnect them. The network is "omniconnected" in the sense that every neuron connects to every other neuron. The time course of a synaptic event is given by a time constant τ, and two simplifying assumptions are made: all weights within a projection are the same, and all time constants within a projection are the same. The resulting behavior is quite interesting. With no input i, the network may settle into a steady resting state, or it may oscillate wildly, depending on the synaptic weights and the relative numbers of neurons.
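
To make this concrete, here is a minimal sketch of the two-population Wilson-Cowan equations, integrated with forward Euler. It assumes the common form with refractory factors, time constants set to 1, a constant drive P to the excitatory population, and a classic parameter set often used to demonstrate a limit cycle. The single weights c1 to c4 stand in for the W matrices under the "all weights in a projection are equal" assumption. These values and the helper names are illustrative assumptions, not the parameters behind the figures.

```python
import math

def wc_sigmoid(x, a, theta):
    """Wilson-Cowan response function, shifted so that wc_sigmoid(0, a, theta) == 0."""
    return 1.0 / (1.0 + math.exp(-a * (x - theta))) - 1.0 / (1.0 + math.exp(a * theta))

def simulate_wilson_cowan(c1=16.0, c2=12.0, c3=15.0, c4=3.0, P=1.25, Q=0.0,
                          dt=0.01, t_max=200.0, E0=0.1, I0=0.05):
    """Forward-Euler integration of
        dE/dt = -E + (k_e - E) * S_e(c1*E - c2*I + P)
        dI/dt = -I + (k_i - I) * S_i(c3*E - c4*I + Q)
    with time constants and refractory factors set to 1.
    c1: E->E, c2: I->E, c3: E->I, c4: I->I; P, Q: constant external drives.
    Returns the excitatory activity E at every step."""
    a_e, th_e, a_i, th_i = 1.3, 4.0, 2.0, 3.7
    k_e = 1.0 - 1.0 / (1.0 + math.exp(a_e * th_e))   # maximum value of S_e
    k_i = 1.0 - 1.0 / (1.0 + math.exp(a_i * th_i))   # maximum value of S_i
    E, I = E0, I0
    trace = [E]
    for _ in range(int(t_max / dt)):
        dE = -E + (k_e - E) * wc_sigmoid(c1 * E - c2 * I + P, a_e, th_e)
        dI = -I + (k_i - I) * wc_sigmoid(c3 * E - c4 * I + Q, a_i, th_i)
        E, I = E + dt * dE, I + dt * dI
        trace.append(E)
    return trace

def late_swing(trace):
    """Peak-to-peak range over the second half of a trace (after transients)."""
    tail = trace[len(trace) // 2:]
    return max(tail) - min(tail)

swing_osc = late_swing(simulate_wilson_cowan())                                  # default weights
swing_rest = late_swing(simulate_wilson_cowan(c1=0.0, c2=0.0, c3=0.0, c4=0.0))   # uncoupled
```

With these weights the excitatory activity keeps cycling long after the start, while with all weights set to zero it simply decays to a steady level, which mirrors the point that the behavior depends on the synaptic weights.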

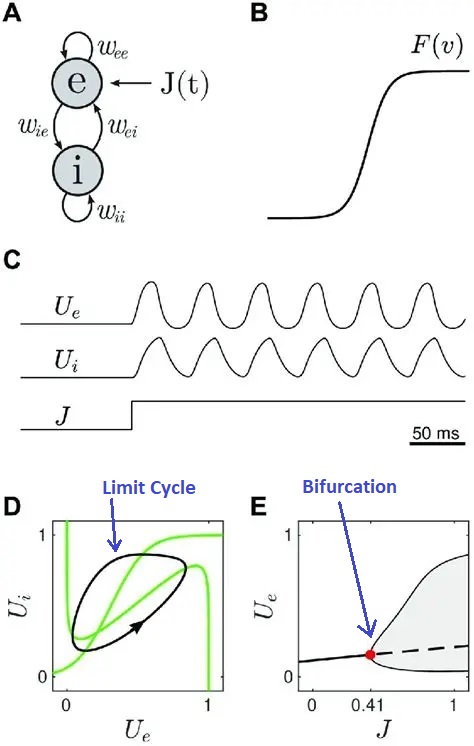

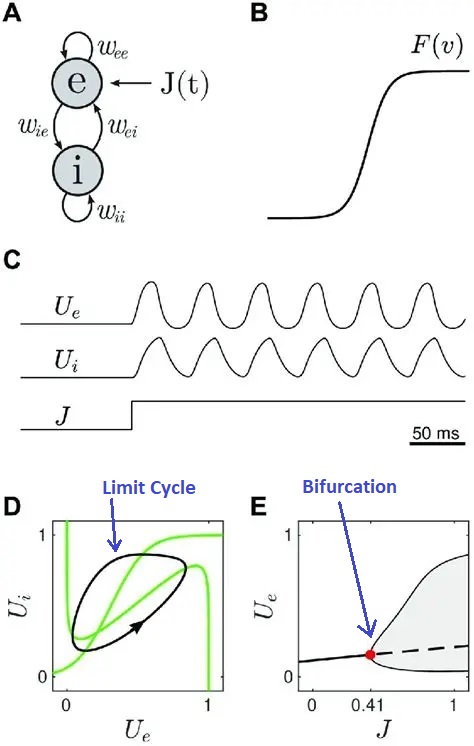

The behavior of the Wilson-Cowan model in phase space is shown in the diagram below. Whenever the trajectory enters the limit cycle orbit (the black line in part D of the figure), oscillatory behavior (brain "waves") will be observed in the population. This diagram shows an explicit input J that drives the excitatory population, so the behavior depends on the strength of that input. At very low strength, the network may not oscillate, and its response may be an exponential decay back to baseline. A stronger input, however, may cause the network to change state and shift into an oscillatory output pattern. The next figure shows two different kinds of trajectories that can be observed in such a system: panel D shows a limit cycle and panel E shows a bifurcation. The bifurcation is important because it is the point at which the system changes state (changes "phase", as it were, although that word is now being used in a different context). The phase transition at a bifurcation point leading to a limit cycle is reminiscent of the action potential, but in this case the entire population of neurons is being influenced by the dynamics.

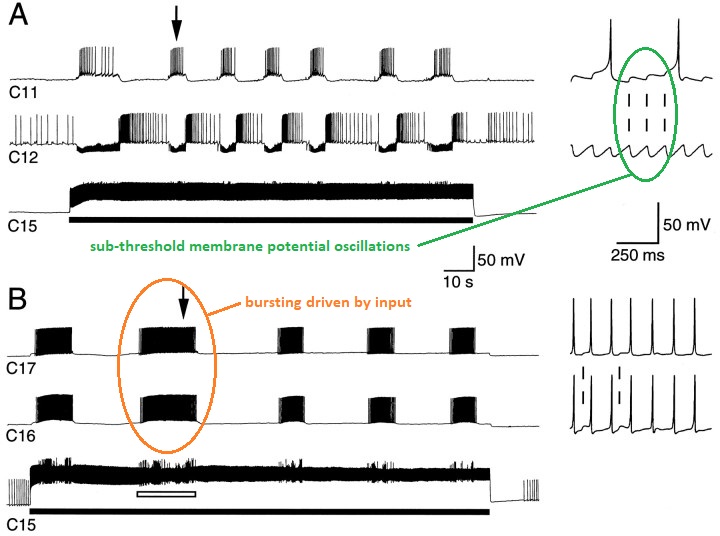

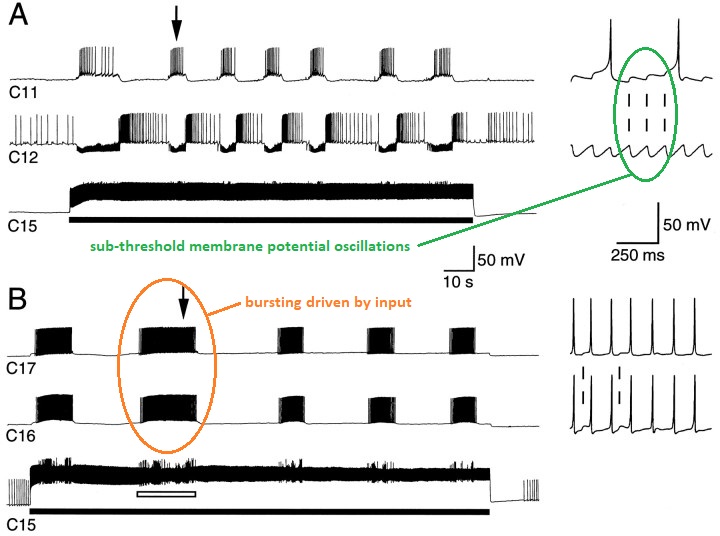

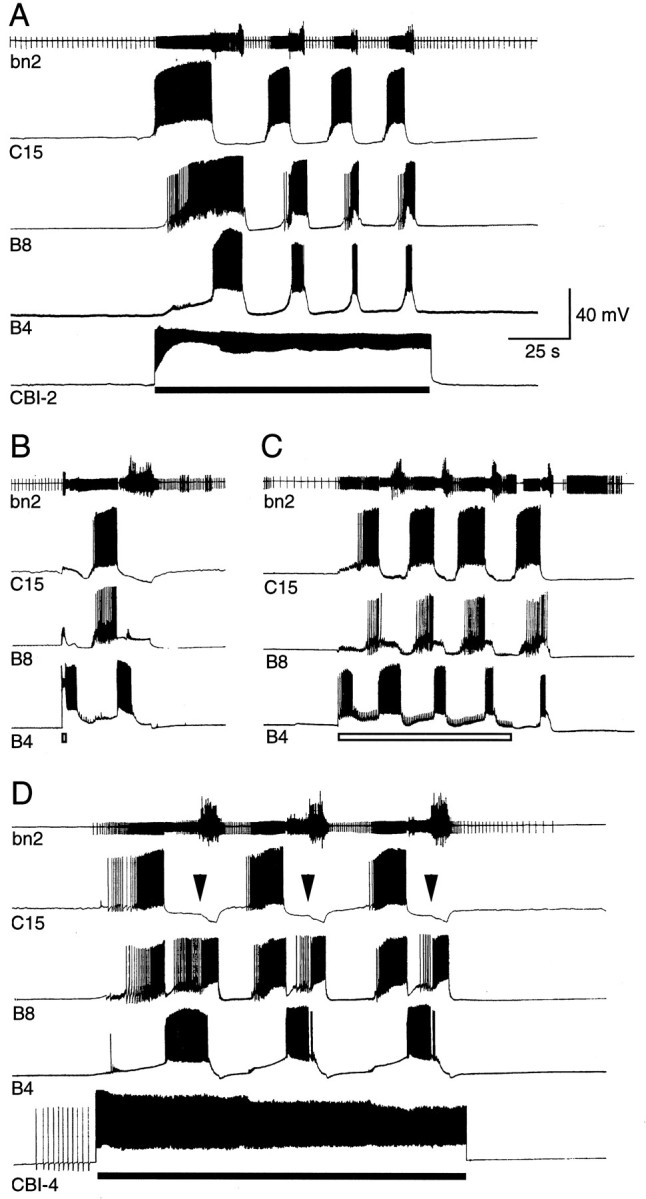

Central Pattern Generators

An entirely different kind of rhythm can be generated inside individual neurons. Such endogenous rhythms typically involve voltage-dependent calcium channels, although other arrangements are possible. The figure shows an endogenous bursting rhythm in a pacemaker neuron from a central pattern generator.

(figure from Perrins & Weiss 1996)

Many neurons have spontaneous activity, and not all of it is regular. In the above diagram the subthreshold oscillations are periodic, but the membrane potential can also have an aperiodic, stochastic ("noisy") character, which can trigger an action potential when it reaches threshold.
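
The role of noise can be sketched with a leaky integrate-and-fire neuron whose mean drive settles 5 mV below threshold. The time constant, potentials, and noise model (an Ornstein-Uhlenbeck fluctuation) are hypothetical choices for illustration: without noise the neuron never fires, while with noise the fluctuations occasionally reach threshold and produce irregular spikes.

```python
import numpy as np

def noisy_lif_spike_count(sigma_mv, t_max_ms=2000.0, dt_ms=0.1, seed=0):
    """Leaky integrate-and-fire neuron with a subthreshold mean drive plus
    Ornstein-Uhlenbeck membrane noise of stationary s.d. sigma_mv.
    Returns the number of threshold crossings (spikes)."""
    tau_ms = 20.0                    # membrane time constant (hypothetical)
    v_inf = -55.0                    # where the mean drive would settle (mV)
    v_th, v_reset = -50.0, -65.0     # threshold and reset/rest potential (mV)
    rng = np.random.default_rng(seed)
    n_steps = int(t_max_ms / dt_ms)
    kicks = sigma_mv * np.sqrt(2.0 * dt_ms / tau_ms) * rng.standard_normal(n_steps)
    v = v_reset
    spikes = 0
    for kick in kicks:
        v += dt_ms * (v_inf - v) / tau_ms + kick   # Euler-Maruyama step
        if v >= v_th:
            spikes += 1
            v = v_reset
    return spikes

quiet = noisy_lif_spike_count(sigma_mv=0.0)   # deterministic: settles at -55 mV, never fires
noisy = noisy_lif_spike_count(sigma_mv=4.0)   # fluctuations occasionally reach threshold
```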

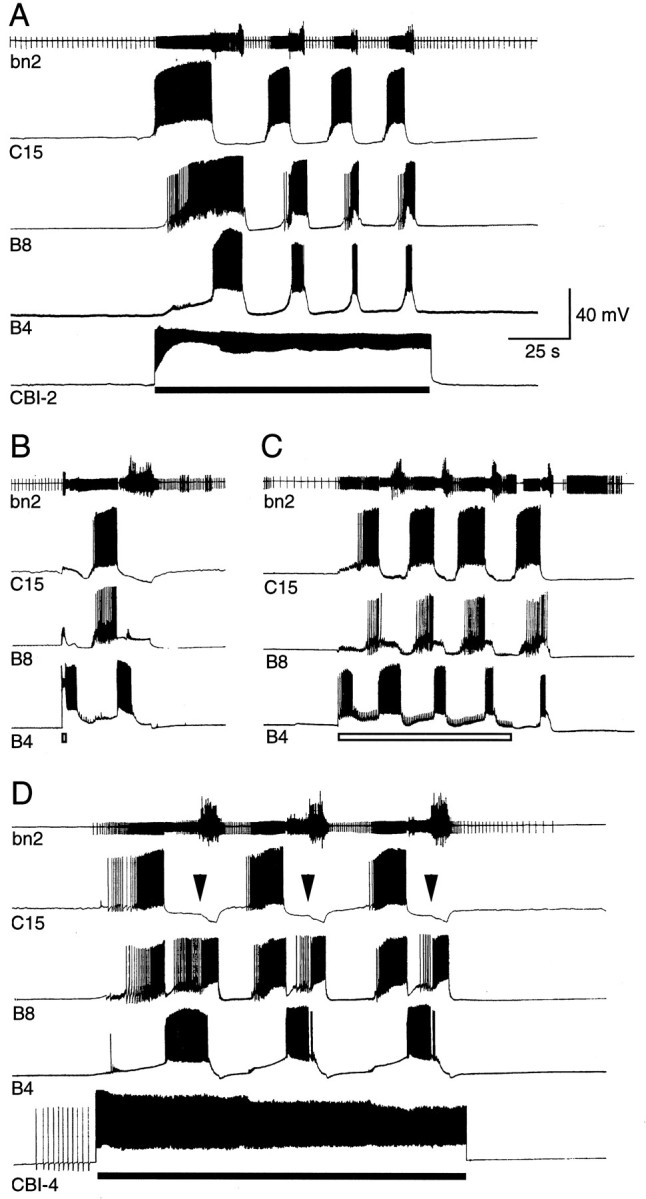

Rhythms from individual neurons can be combined with population activity. Brain nuclei frequently consist of populations of bursting neurons, and the interaction of the various frequencies depends on the network connectivity. In the figure below one can clearly see the alternating activity in neighboring neurons, driven by the central pattern generator.

A common feature of many pattern generators is that they have a subpopulation of inhibitory neurons that can turn them off. In many cases, though, these inhibitory neurons do more than just turn off the bursting: at lower levels of inhibition they can pattern the spike trains within a burst, as well as adjust the timing of bursts.
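
Alternation driven by reciprocal inhibition can be illustrated with a half-center oscillator. The sketch below swaps in the Matsuoka two-neuron model (not the circuit shown in the figure): each unit inhibits the other and slowly adapts to its own activity. The parameter values are assumptions chosen to satisfy the oscillation conditions usually quoted for this model, roughly 1 + tau/T < a < 1 + b.

```python
import numpy as np

def half_center(a=2.5, b=2.5, s=1.0, tau=1.0, T=6.0, dt=0.01, t_max=200.0):
    """Matsuoka two-neuron oscillator.
    tau*dx_i/dt = -x_i - b*v_i - a*y_j + s   (j is the other unit)
    T*dv_i/dt   = -v_i + y_i,   y_i = max(x_i, 0)
    a: mutual inhibition, b: self-adaptation, s: tonic drive."""
    x = np.array([0.1, 0.0])     # asymmetric start breaks the symmetry
    v = np.zeros(2)              # adaptation variables
    n = int(t_max / dt)
    out = np.empty((n, 2))
    for k in range(n):
        y = np.maximum(x, 0.0)                   # rectified firing rates
        x = x + dt * (-x - b * v - a * y[::-1] + s) / tau
        v = v + dt * (-v + y) / T
        out[k] = y
    return out

rates = half_center()
tail = rates[len(rates) // 2:]                   # discard the start-up transient
anti_corr = np.corrcoef(tail[:, 0], tail[:, 1])[0, 1]
```

The two output rates are strongly anticorrelated: while one unit is active it suppresses the other, until its own adaptation lets the partner take over.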

A neural oscillator can thus be built in a number of ways, depending on the need. In general a single neuron is unreliable, so rhythms that need to be reliable are usually handled by populations of neurons. A population organization makes it easier to control the oscillator, including its amplitude, frequency, and phase. Populations of neurons are also used to encode important values, and the encoding can take place at a number of levels. In some systems, like the oculomotor integrator, encoding is based on variation in the slopes and intercepts of the neurons' current-frequency curves. In other systems, encoding can be based on gradients, both in connectivity and in receptor and ion channel organization.

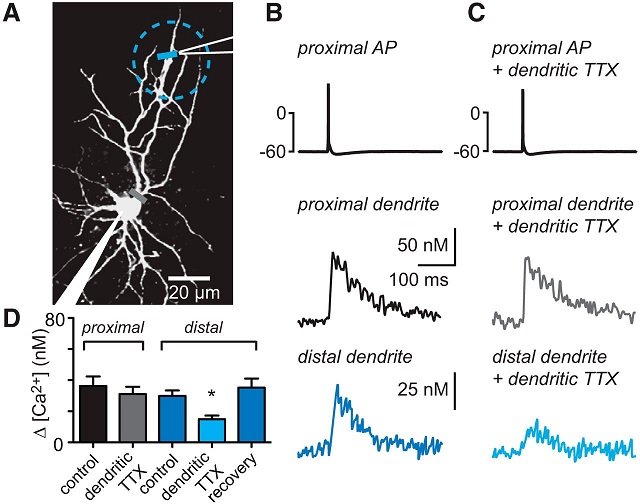

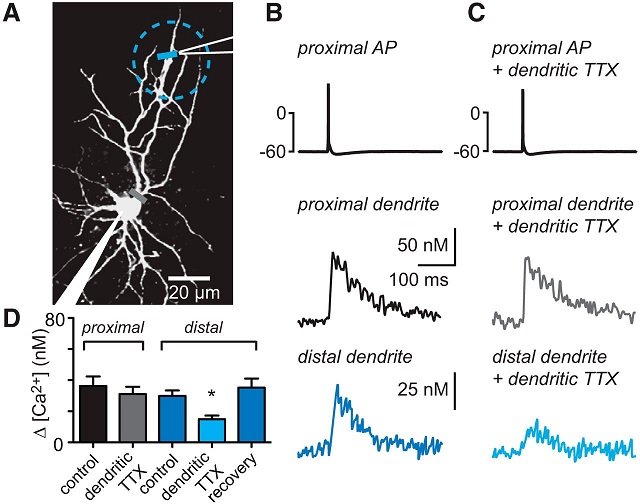

Distribution of Calcium Channels

Riding on top of population rhythms are the rhythmic behaviors of individual neurons. Typically (but not always), these are much faster than population oscillations. Endogenous rhythmicity is often related to voltage-dependent calcium channels, and their distribution along the nerve membrane determines the areas of sensitivity and behavior. In the dendritic spines visualized earlier, calcium channels are sometimes distributed along the postsynaptic membrane and in the shafts as well, but not necessarily along the main dendrites. At other times the situation is reversed. In general, wherever the calcium channels are is where we can start looking for rhythmic behavior (there are other ways to achieve it, but that's a good place to start).

The figure below shows fast rhythmic potentials generated by voltage-dependent calcium channels in the apical dendritic tree of a cortical pyramidal neuron. You can see the slow changes in membrane potential related to an EPSP, but you can also see fast rhythmic oscillations riding on it. These oscillations may occur at low or high frequencies depending on the underlying molecular kinetics; the fast oscillations might be 100 Hz or even higher. We will return to the issue of noise later, but it is important to be able to distinguish fast oscillations from simple noise. Low-pass filtering a recorded signal at 40 Hz to get rid of unwanted noise is dangerous, because you won't see (or notice) any faster activity.
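
The danger can be demonstrated with a synthetic trace: a slow, EPSP-like depolarization with a 100 Hz oscillation riding on it. The sketch assumes an idealized FFT "brick-wall" filter rather than any particular acquisition system, and all amplitudes are made up. After filtering at 40 Hz the oscillation is gone, and the output is indistinguishable from the slow component alone.

```python
import numpy as np

def ideal_lowpass(signal, fs, cutoff_hz):
    """Idealized 'brick-wall' low-pass filter: zero every Fourier component
    above cutoff_hz. (Real acquisition filters roll off gradually.)"""
    spectrum = np.fft.rfft(signal)
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / fs)
    spectrum[freqs > cutoff_hz] = 0.0
    return np.fft.irfft(spectrum, n=len(signal))

fs = 10_000.0                                   # sampling rate (Hz)
t = np.arange(10_000) / fs                      # 1 s of synthetic recording
slow = 5.0 * np.exp(-((t - 0.5) / 0.1) ** 2)    # slow EPSP-like depolarization (mV)
fast = 0.5 * np.sin(2 * np.pi * 100.0 * t)      # 100 Hz oscillation riding on it
recorded = slow + fast
filtered = ideal_lowpass(recorded, fs, cutoff_hz=40.0)
leftover = np.max(np.abs(filtered - slow))      # how much of the 100 Hz activity survives
```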

Synchronization of Rhythms

The concept of synchronization takes on several different meanings, depending on whether we're looking at population activity or activity intrinsic to neurons. In both cases, the rhythms can be "entrained" by external stimuli. This means the frequency and/or phase of internal rhythms aligns itself with the input. Such alignment is visible at many levels, in single neurons, in neural populations, and in the EEG.
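
Entrainment can be sketched with the Adler phase equation, a standard reduced description of one oscillator driven by a periodic input (a swapped-in textbook model, not one taken from this page). Here phi is the oscillator's phase relative to the input, delta_omega the frequency mismatch, and coupling the strength of the input's influence; the oscillator locks when |delta_omega| < coupling.

```python
import math

def adler_phase(delta_omega, coupling, dt=0.01, t_max=50.0, phi0=0.0):
    """Euler integration of the Adler equation
        d(phi)/dt = delta_omega - coupling * sin(phi),
    where phi is the oscillator's phase relative to a periodic input."""
    phi = phi0
    for _ in range(int(t_max / dt)):
        phi += dt * (delta_omega - coupling * math.sin(phi))
    return phi

locked = adler_phase(delta_omega=0.5, coupling=1.0)    # settles at asin(0.5) = pi/6
drifting = adler_phase(delta_omega=2.0, coupling=1.0)  # mismatch too large: phase keeps slipping
```

When the input is strong enough relative to the mismatch, the phase difference settles to a constant, which is the entrained state; otherwise it grows without bound.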

Synchronization can be accomplished through inhibition. In the human brain, inhibitory activity is most often associated with the neurotransmitters GABA and glycine. In the case of GABA there are two main types of receptor-linked behaviors. The GABA-A receptor is a directly ligand-gated ion channel, most often for chloride ions; it is a drug target for epilepsy, insomnia, and anxiety, as well as for anesthesia during surgery. GABA-B is a metabotropic receptor that exerts its effects through G-proteins, ultimately opening potassium channels and hyperpolarizing the cell through efflux of K+ ions. GABA-B receptors can inhibit the voltage-gated calcium channels associated with subthreshold membrane oscillations, and this is one way of synchronizing the activity of nearby neurons: a simultaneous signal from a GABA axon to multiple relay neurons tends to synchronize the reactivation of their calcium channels upon rebound, thereby synchronizing the bursting in the relay neurons. GABA-B receptors are also found presynaptically, on the axon terminals themselves, where they can play a role in presynaptic inhibition and modulate the release of other neurotransmitters.

There are many forms of spiny GABA inhibitory interneurons all over the human brain, and many of them have very obvious wiring patterns. Some of them encapsulate an axon hillock or the base of an apical dendrite. One can therefore infer that the inhibitory cell controls the conditions under which its target is suppressed. The fact that there are spines suggests that these neurons learn. There are also conditions under which an inhibitory GABA neuron can reset the phase of a population rhythm, in much the same way that an incoming IPSP can reset the phase of a subthreshold membrane oscillation in a single neuron. We will examine synchronization in increasing detail in the sections on coupled oscillators and criticality.

Bursting Behavior

When a neuron "fires", the action potential travels in both directions. It doesn't just go down the axon; it also invades the dendrites antidromically. The confluence of action potentials with (dendritic) synaptic activity is known to be one of the key factors in synaptic plasticity ("neurons that fire together, wire together"). Many successful neural network models are based on the covariance of input and output signals, and in fact the back-propagation method used in machine learning is merely a way of getting error information (which derives from covariance) back to the origin.
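
A covariance-based learning rule can be written in a few lines. This sketch assumes the simplest batch form, in which the change in each weight is proportional to the covariance between that input and the output; the learning rate and the synthetic signals are hypothetical.

```python
import numpy as np

def covariance_rule_update(x, y, eta=0.1):
    """Covariance (Hebbian) learning rule: the weight change for each input is
    proportional to the covariance between that input and the output.
    x: array (samples, inputs); y: array (samples,)."""
    dx = x - x.mean(axis=0)
    dy = y - y.mean()
    return eta * (dx * dy[:, None]).mean(axis=0)

rng = np.random.default_rng(1)
x = rng.standard_normal((10_000, 2))              # two presynaptic signals
y = x[:, 0] + 0.5 * rng.standard_normal(10_000)   # output driven by input 0 only
dw = covariance_rule_update(x, y)                 # input 0 strengthens, input 1 barely changes
```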

Electrical signals detectable on the scalp have two general requirements: the neurons have to be aligned in some kind of layered structure (otherwise their local field potentials cancel out), and they have to fire at the same time to create a signal large enough to detect. Even under these conditions, hundreds of traces typically have to be averaged to extract an evoked potential. High-definition and high-density ("HD") EEG exist, but the only way to do much better than ordinary EEG is to record invasively, for example with electrocorticography (ECoG) on the surface of the brain or with an implanted electrode. Bursting cannot generally be detected in the EEG, although some high-frequency energy may be detectable.
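
The need for averaging follows from noise statistics: if the evoked response is time-locked to the stimulus and the background activity is independent from trial to trial, averaging N trials leaves the response intact and shrinks the noise by about 1/sqrt(N). The amplitudes below are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
fs = 1000.0                                          # samples per second
t = np.arange(500) / fs                              # 500 ms epoch after each stimulus
evoked = 2.0 * np.exp(-((t - 0.1) / 0.02) ** 2)      # hypothetical 2 uV evoked response
noise_sd = 20.0                                      # assumed background EEG level (uV)
n_trials = 400
trials = evoked + noise_sd * rng.standard_normal((n_trials, t.size))
average = trials.mean(axis=0)
single_trial_error = (trials[0] - evoked).std()      # about 20 uV: response buried
averaged_error = (average - evoked).std()            # about 20 / sqrt(400) = 1 uV
```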

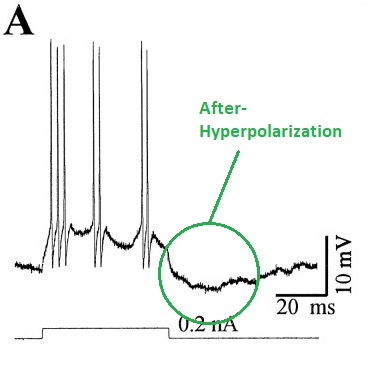

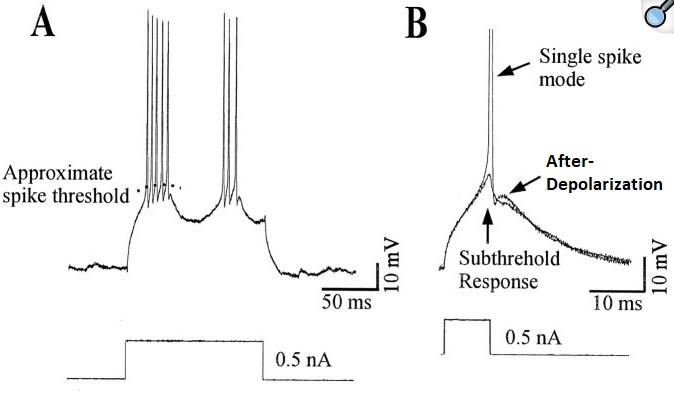

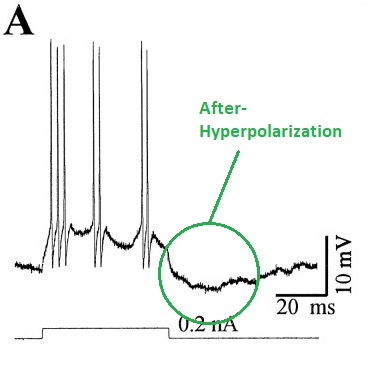

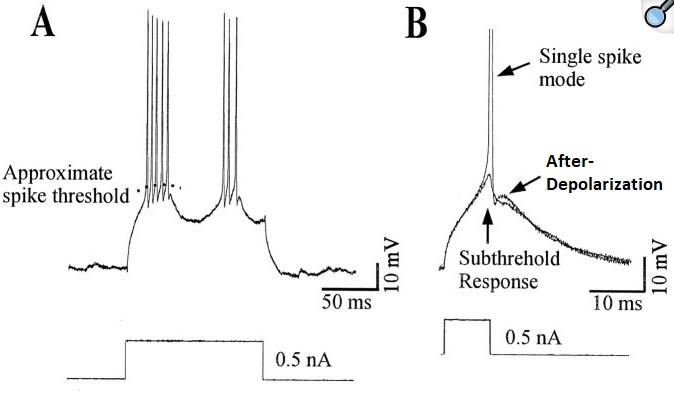

Generally, two types of bursting behavior can be distinguished. Some individual neurons burst intrinsically (they have a magic combination of ion channels that enables this behavior), and bursting can also arise from network configuration, regardless of whether individual neurons burst in isolation. Neurons that burst by themselves usually rely on various combinations of three ion channels: a voltage-dependent inactivating calcium channel, a hyperpolarizing potassium channel, and a tonic sodium current. They typically exhibit characteristic after-potentials (depolarizing, hyperpolarizing, or both) that depend on the channel dynamics. Chloride conductances (often driven by GABA) can inhibit such bursts or convert them to ordinary spike trains (see Li and Shew 2020).

In networks, bursting can occur in the entire population at once, or in small localized portions of a network layer. An input or a small change in synaptic strength can drive the network from stable, to metastable, and into unstable modes. For example, in the Wilson-Cowan model shown above, giving the excitatory neurons STDP is sufficient to drive the network into periodic bursting. Bursting of the whole network all at once is akin to an epileptic state and is not likely to be computationally useful. However, localized bursting can contribute to a computationally useful level of criticality, as we'll discuss on subsequent pages.

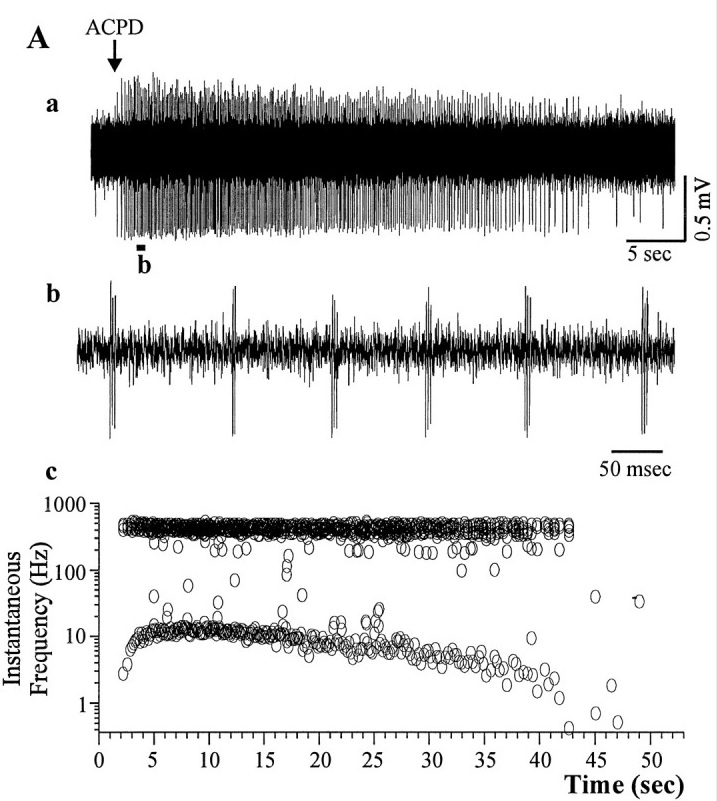

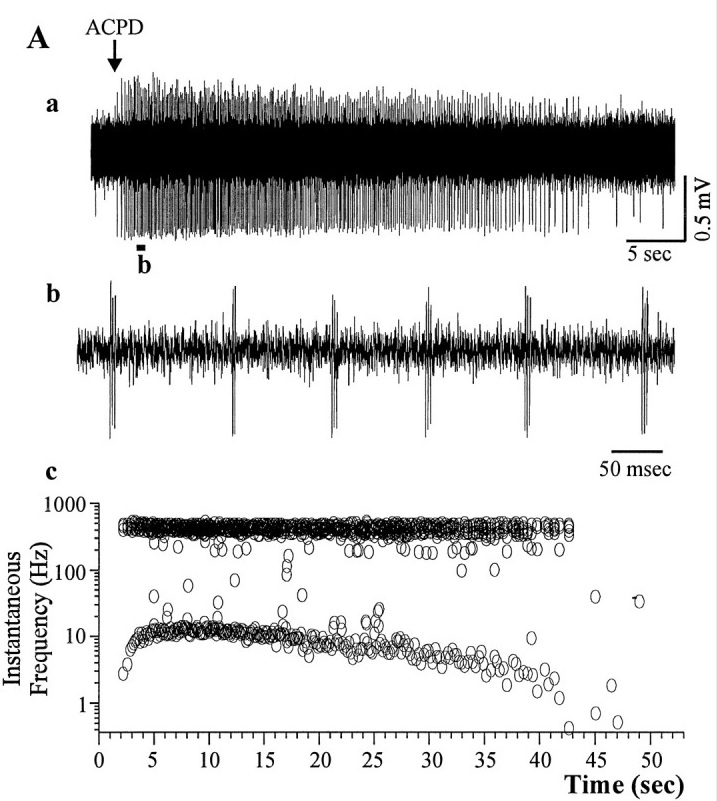

The figure shows bursting induced in a cortical neuron by a chemical agonist. From a recording like this, it is difficult to tell whether the bursts are due to the neuron or to the network (or both, and in what degree). Even if the agonist is synaptic, it may influence tonic transmission mechanisms that maintain network stability.

(figure from Brumberg et al 2000)

If we take the waveform in (b) and expand it, we can see individual action potentials as well as the underlying membrane potential. Bursting can also be elicited electrically, by applying small intracellular current pulses. This particular neuron, from the visual cortex, spikes at 71 Hz.

(figure from Brumberg et al 2000)
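
For scale, assuming evenly spaced spikes, a 71 Hz rate corresponds to an interspike interval (ISI) of about 14 ms:

```latex
\mathrm{ISI} = \frac{1}{f} = \frac{1}{71\,\mathrm{Hz}} \approx 0.0141\,\mathrm{s} \approx 14.1\,\mathrm{ms}
```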

The same neuron can display multiple modes, for example a burst mode, a single-spike mode, and a quiescent mode. Multi-stability can occur in both the network and in individual neurons. In many cases these modes are interrelated and can control each other.

(figure from Brumberg et al 2000)

There are several dozen kinds of ion channels that can contribute to neural membrane behavior, and usually it is necessary (and desirable) to simplify. Model complexity can be reduced by cutting the number of channels down to the essentials (as in Wilson 1999), by making assumptions about the average behavior of the collection of channels, or by disregarding channels altogether and simply modeling the membrane as a combination of nonlinear dynamics. Each of these compromises has a cost; in some cases the cost is bearable, in others not. This is an area where the judicious use of modeling tools can help us. A single-neuron simulator can often predict behavior related to population dynamics, and using such a simulator to explore synaptic behaviors is often time well spent.
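
As an example of the last approach, the sketch below swaps in the Izhikevich (2003) two-variable model, which reproduces bursting with a quadratic voltage equation and one recovery variable, with no explicit channels. The a, b, c, d values are the "chattering" set from that paper; the input current, step size, and duration are our own assumptions, and this is not the Wilson 1999 reduction.

```python
import numpy as np

def izhikevich_spike_times(a=0.02, b=0.2, c=-50.0, d=2.0, current=10.0,
                           t_max_ms=500.0, dt_ms=0.25):
    """Izhikevich two-variable neuron (forward Euler).
        dv/dt = 0.04 v^2 + 5 v + 140 - u + I
        du/dt = a (b v - u);  if v >= 30 mV: v <- c, u <- u + d
    Defaults are the 'chattering' (bursting) parameters. Returns spike times (ms)."""
    v, u = -65.0, b * -65.0          # membrane potential (mV) and recovery variable
    spikes = []
    for k in range(int(t_max_ms / dt_ms)):
        if v >= 30.0:                # spike peak reached: record and reset
            spikes.append(k * dt_ms)
            v, u = c, u + d
        dv = 0.04 * v * v + 5.0 * v + 140.0 - u + current
        du = a * (b * v - u)
        v += dt_ms * dv
        u += dt_ms * du
    return np.array(spikes)

spikes = izhikevich_spike_times()
isi = np.diff(spikes)                # short intervals inside bursts, long pauses between them
```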

Oscillations can be turned on or off at the level of the individual neuron or of the population. There is plenty of evidence for both types of control at the synaptic level. For example, in the frontal cortex, neurons utilizing BDNF (brain-derived neurotrophic factor) as a neurotransmitter exhibit delayed and periodic bursting in response to inputs and depolarizations. In a time-sensitive network, one of the important parameters is "when" to fire the neuron. To some extent this is under the influence of subthreshold membrane oscillations, and "priming" behavior has been observed, where the output spike "waits" until the rising edge of the next subthreshold oscillation. The importance of effects like this depends heavily on the coupling of individual neurons to population behavior. Even if there is no direct ionic coupling between neurons, population rhythms and neuronal rhythms can entrain each other synaptically and via astrocytes.

Coherence

Just because two neurons fire at the same time doesn't mean they're connected ("correlation does not imply causation"). However, the neuroscience literature is replete with interesting forms of cross-modulation, although the vocabulary differs considerably from that of radio engineers, and in many cases the words carry specific meanings in addition to their usual physical ones.

For example, in the human nucleus accumbens there is "phase-amplitude coupling" between oscillatory signals at different frequencies (Durschmid et al 2013). Looking at the waveforms, a radio engineer would conclude it's a simple form of amplitude modulation, but there's more to it than that. In the neuroscience sense, proper coupling requires synchronization between high and low frequencies at a particular point in time. The selected point is important, and it's coordinated with other brain activities. Phase matters, as we'll see in the section on coupled oscillators.
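
One common way to quantify phase-amplitude coupling is the normalized mean vector length: take the phase of the low-frequency band and the envelope of the high-frequency band, and measure how strongly the envelope concentrates at particular phases. The sketch below is an idealized version (FFT band-pass filters, synthetic 6 Hz and 60 Hz signals, band edges chosen by us); it is not necessarily the method used by Durschmid et al.

```python
import numpy as np

def bandpass(x, fs, lo, hi):
    """Idealized FFT band-pass: keep only components with lo <= f <= hi."""
    X = np.fft.rfft(x)
    f = np.fft.rfftfreq(len(x), d=1.0 / fs)
    X[(f < lo) | (f > hi)] = 0.0
    return np.fft.irfft(X, n=len(x))

def analytic_signal(x):
    """Analytic signal via the FFT (the construction behind a Hilbert transform)."""
    n = len(x)
    h = np.zeros(n)
    h[0] = 1.0
    if n % 2 == 0:
        h[n // 2] = 1.0
        h[1:n // 2] = 2.0
    else:
        h[1:(n + 1) // 2] = 2.0
    return np.fft.ifft(np.fft.fft(x) * h)

def mean_vector_length(x, fs, phase_band=(4, 8), amp_band=(40, 80)):
    """Normalized mean vector length: |mean(A * exp(i*phase))| / mean(A)."""
    phase = np.angle(analytic_signal(bandpass(x, fs, *phase_band)))
    amp = np.abs(analytic_signal(bandpass(x, fs, *amp_band)))
    return float(np.abs(np.mean(amp * np.exp(1j * phase))) / np.mean(amp))

fs = 1000.0
t = np.arange(10_000) / fs                                  # 10 s of synthetic signal
theta = np.cos(2 * np.pi * 6 * t)
carrier = np.cos(2 * np.pi * 60 * t)
coupled = theta + 0.3 * (1 + 0.8 * theta) * carrier         # 60 Hz amplitude follows 6 Hz phase
uncoupled = theta + 0.3 * carrier                           # constant 60 Hz amplitude
mvl_coupled = mean_vector_length(coupled, fs)
mvl_uncoupled = mean_vector_length(uncoupled, fs)
```

The coupled signal scores about 0.4 (half the modulation depth of 0.8), while the signal with a constant 60 Hz amplitude scores essentially zero.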

There are many forms of coherence in signal processing, and the one we're most interested in is phase coherence. This is when oscillators at the same frequency, but in different parts of the brain, align their phases through mutual coupling. This behavior has been studied extensively, but it's still unclear exactly how it relates to computations in neural networks. What has become clear is that at some point the spatial topography gets encoded into periodic firing patterns, and this behavior depends on "phase encoding", which means alignment relative to another signal. We'll study phase encoding and the Kuramoto model of coupled oscillators when we discuss dynamics.
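
As a brief preview, a minimal Kuramoto sketch shows phase coherence emerging from mutual coupling. It assumes all-to-all sinusoidal coupling, natural frequencies spread narrowly around a common value, and random initial phases. The order parameter r is 1 for perfect alignment and near 0 for scattered phases; the parameter values are illustrative.

```python
import numpy as np

def kuramoto_coherence(K, n=200, spread=0.1, dt=0.01, t_max=50.0, seed=0):
    """Mean-field Kuramoto model:
        d(theta_i)/dt = omega_i + K * r * sin(psi - theta_i),
    where r*exp(i*psi) is the mean of exp(i*theta_j) over all oscillators.
    Returns the final order parameter r (1 = all phases aligned)."""
    rng = np.random.default_rng(seed)
    omega = 1.0 + spread * rng.standard_normal(n)   # nearly equal natural frequencies
    theta = rng.uniform(0.0, 2 * np.pi, n)          # random initial phases
    for _ in range(int(t_max / dt)):
        z = np.mean(np.exp(1j * theta))
        theta = theta + dt * (omega + K * np.abs(z) * np.sin(np.angle(z) - theta))
    return float(np.abs(np.mean(np.exp(1j * theta))))

r_coupled = kuramoto_coherence(K=2.0)     # phases pull together
r_uncoupled = kuramoto_coherence(K=0.0)   # phases stay scattered
```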

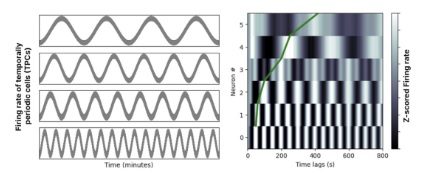

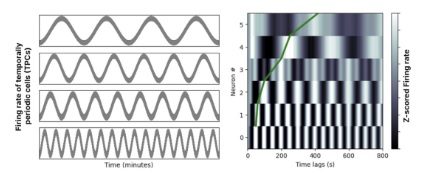

Sculpting Neuron Behavior

Spike trains can be sculpted in three ways. The first is biological: the genetic programming goes far beyond the shapes and time constants of ion channels. For example, there are chemical gradients outside the cell that cover broad areas of the brain's networks in functionally significant ways. An example we'll see several times on these pages is the population of ramp cells in the entorhinal cortex. Much like the grid cells that cover space and enable the "place cells" in the hippocampus, the ramp cells cover time. There is a gradient of ramp frequencies from dorsal to ventral areas in the entorhinal cortex. One may wonder whether that's due to differences in the ion channels, but it turns out to be related to a gradient of a marker molecule that instructs the cell where it is in the geometry. This same principle is also found in the developing retina, and in many other parts of the brain. It affects "population" behavior, such as the slope of the ramps. The distribution of ramping frequencies is shown in the figure. Together they form a time-to-space map that can be used to locally tag the time of occurrence of events. When combined with phase encoding relative to a periodic signal (like theta), this mechanism can encode the local order of events.

(picture from Kreiman lab, Harvard University)
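
A time-to-space map of this kind can be sketched with a population of units whose activity changes at different rates after an event. The sketch assumes, hypothetically, that each unit decays exponentially with a time constant drawn from a gradient (our stand-in for the dorsal-to-ventral gradient, not measured values). Elapsed time is then read out by matching the population state against templates.

```python
import numpy as np

# Hypothetical gradient of decay time constants (s) across the population;
# the values are illustrative only.
TAUS = np.linspace(1.0, 20.0, 16)

def population_state(t):
    """Activity of every unit t seconds after an event (exponential decay assumed)."""
    return np.exp(-t / TAUS)

def decode_elapsed_time(state, t_max=30.0, n_grid=3001):
    """Estimate elapsed time by finding the best-matching population template."""
    t_grid = np.linspace(0.0, t_max, n_grid)
    templates = np.exp(-t_grid[:, None] / TAUS[None, :])
    errors = ((templates - state[None, :]) ** 2).sum(axis=1)
    return float(t_grid[np.argmin(errors)])

estimate = decode_elapsed_time(population_state(7.3))   # recovers about 7.3 s
```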

The second way spike trains can be sculpted is with actual conductances. Here we are informed by the conductances related to bursting, which have been well studied. Among the important currents are an HCN conductance (a hyperpolarization-activated, non-specific cation conductance that is modulated by cyclic nucleotides), a voltage-dependent calcium conductance whose inactivation is removed by hyperpolarization, and a sodium conductance that may be either persistent or transient. However, many more conductances can affect the spike train; in thalamo-cortical relay neurons, for example, at least seven such conductances have been identified (Amarillo et al 2014).

The third way spike trains can be sculpted is, of course, with synaptic input. Generally speaking, chemical synapses have time courses of one to several msec and delays of around 1 msec, so a single synapse would be insufficient to program a spike train all by itself. However, multiple synapses positioned at different points along the dendritic tree can do the job. There are many specializations in both the synapses and their positioning. In the case of dendritic spines, there is good reason to believe they exist for the purpose of compartmentalization, and indeed we find that transmission through spiny synapses is often high-amplitude and very reliable. With isolated synapses, the strength and time course of each synapse can be controlled individually. There are also cases where the opposite is desirable, and in these cases it is worth remembering that changes in the conductances (whether synaptically driven or not) alter the membrane time constant. In the Hodgkin-Huxley model this can occur by altering either the resistances (conductances) or the membrane capacitance. Sometimes combinations of time constants can endow the membrane with filtering properties and with resonances (Dudman and Nolan 2009).
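
The dependence of the membrane time constant on conductance follows from tau = C_m / g_total. The values below are assumptions in a commonly used range (1 µF/cm² capacitance, 0.1 mS/cm² leak, 0.4 mS/cm² of extra open synaptic conductance); opening the extra conductance shortens tau from 10 ms to 2 ms.

```python
def membrane_time_constant_ms(c_m, conductances):
    """Membrane time constant tau = C_m / g_total.
    c_m in uF/cm^2, conductances in mS/cm^2 -> tau in ms."""
    return c_m / sum(conductances)

tau_rest = membrane_time_constant_ms(1.0, [0.1])           # leak only: 10 ms
tau_shunted = membrane_time_constant_ms(1.0, [0.1, 0.4])   # leak + open synapses: 2 ms
```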

An interesting behavior is displayed by chloride ion conductances, which are often controlled by GABA and have an equilibrium potential just slightly above the resting potential. When the chloride equilibrium potential lies between the resting potential and the threshold, opening GABA-gated chloride channels depolarizes (excites) a membrane that is below the equilibrium potential and inhibits one that is above it. Besides serving as a crude form of multiplication, this effect can become important in learning that involves spike-timing dependent plasticity (STDP). In a timing-sensitive network like the hippocampus, STDP and other forms of learning need to be tightly regulated, because they need to occur in synchrony with both sensory input from the surrounding cortex and contextual information from the frontal lobes.
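
The sign change can be checked numerically. The sketch assumes hypothetical chloride concentrations (120 mM outside, 16 mM inside) at body temperature, which put E_Cl near -54 mV, between a -65 mV rest and a -50 mV threshold. The chloride current I = g(V - E_Cl) is then inward (depolarizing) at rest and outward (hyperpolarizing) above E_Cl.

```python
import math

R, F = 8.314462618, 96485.33212   # gas constant J/(mol K), Faraday constant C/mol

def nernst_mv(c_out_mm, c_in_mm, z, temp_k=310.15):
    """Nernst equilibrium potential in mV for an ion of valence z."""
    return 1000.0 * (R * temp_k) / (z * F) * math.log(c_out_mm / c_in_mm)

def chloride_current(v_mv, g_cl, e_cl_mv):
    """Ohmic current I = g (V - E). Positive values are outward currents, which
    push V down; negative values are inward currents, which push V up."""
    return g_cl * (v_mv - e_cl_mv)

# Hypothetical concentrations chosen so E_Cl sits between rest and threshold.
e_cl = nernst_mv(c_out_mm=120.0, c_in_mm=16.0, z=-1)   # about -53.9 mV
at_rest = chloride_current(-65.0, 1.0, e_cl)           # negative: depolarizing
near_threshold = chloride_current(-45.0, 1.0, e_cl)    # positive: hyperpolarizing
```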

For purposes of modeling and research, there are dozens of ionic conductance types available in simulation engines like NEURON and NEST, and you can roll your own with Brian2. The conductances may be persistent or non-persistent, inactivating or non-inactivating, inward- or outward-rectifying, specific or non-specific, and so on. In many cases there are non-obvious interactions with ions other than those passing through the channel; for example, cerebral NMDA receptors are blocked by extracellular magnesium, which must be expelled (typically by depolarization) before calcium can pass.
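
The voltage dependence of the magnesium block is often modeled with the empirical fit of Jahr and Stevens (1990), sketched below for an assumed, typical 1 mM extracellular Mg2+. Depolarization relieves the block, which is why NMDA receptors pass appreciable current mainly when the postsynaptic membrane is already depolarized.

```python
import numpy as np

def nmda_unblocked_fraction(v_mv, mg_mm=1.0):
    """Fraction of NMDA conductance not blocked by extracellular Mg2+
    (Jahr & Stevens 1990 fit): 1 / (1 + [Mg]/3.57 * exp(-0.062 V))."""
    return 1.0 / (1.0 + (mg_mm / 3.57) * np.exp(-0.062 * v_mv))

at_rest = nmda_unblocked_fraction(-70.0)       # mostly blocked (about 4% open)
depolarized = nmda_unblocked_fraction(0.0)     # block largely relieved (about 78% open)
```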

This all gets very complicated. To inform a machine architecture from a neuroscience standpoint, maybe we don't need to be so specific. Many times a qualitative model is sufficient to demonstrate a behavior, or prove a point. Let's look at some ways we can simplify things, by considering some model neurons and synapses that distill essential behaviors.
