Why do we care about a timeline? Isn't the firing of neurons enough? Well... brain electrical activity is topological: it has a lot to do with geometry and embeddings. To really understand this, we have to journey through the world of neurons, look at a couple of examples of real-life brain wiring, and then revisit the concept of the timeline after a bit of study. Meanwhile, we can set the stage with a geometric example, one of the many ways in which topology becomes vitally important in the study of brain function.

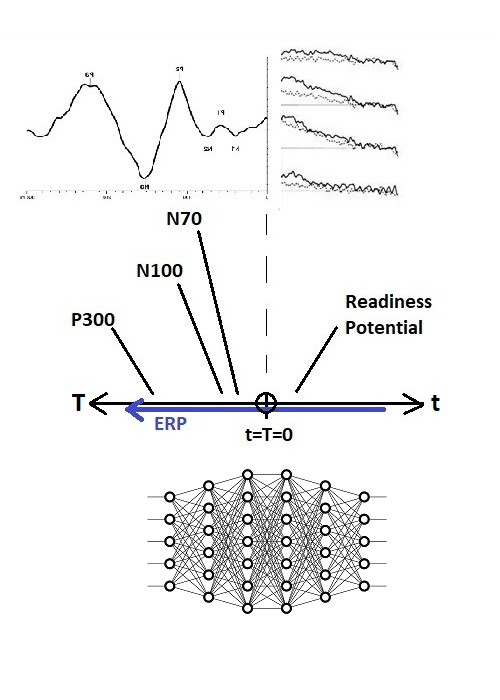

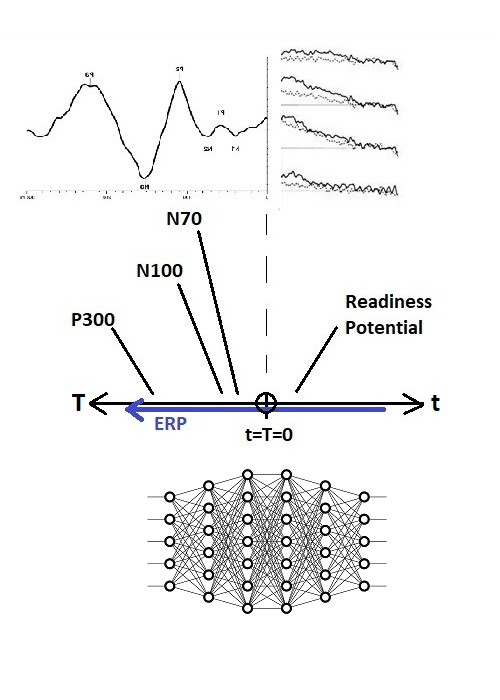

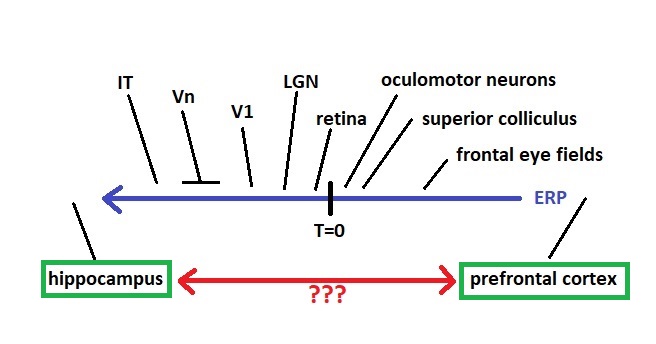

The brain’s timeline is similar to a sequential series of neural network layers in a machine learning model. For example, if we consider the visual system, we can trace the electrical activity from the retina through V1 and then V4, all the way into the hippocampus, and we can map these processing stages along the timeline. The N100 visual evoked potential, for instance, occurs at T = -100 msec. We can do the same thing for the auditory evoked response, premotor potentials, and in general any electrical activity linking two brain regions. Note that this mapping is discrete: successive points in a sensory or motor domain are mapped to relatively well-defined intervals along the timeline. Keep in mind, too, that activity is mapped relative to an egocentric reference frame.

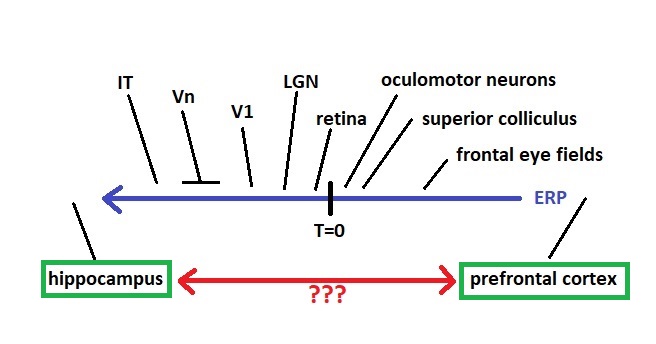

Here is the same map shown a different way, with stages of processing for a voluntary eye movement mapped to a timeline. There are two relevant features. First, an observer network, connected in such a way that it can see the entire timeline, has access to the entire time course of the voluntary movement, including its sensory consequences. (In other words, if the timeline were a retina, the patterns of moving information would be just like a visual image moving across the visual field.) Second, we can map brain structures to the timeline, if we know which of them generate the measured signals.

It turns out that the areas at the ends, labeled "hippocampus" and "prefrontal cortex", have their own very special way of encoding time. We'll take a close look at it on these pages. First, though, we need to understand whether and how these areas relate to the timeline. For example, the hippocampus gets input from just about the entire extent of the sensory timeline (especially along T < 0), and a considerable portion of the motor timeline as well. How are the signal delays organized into a unified sensory experience? In visual perception, the spatial frequency information from V1 gets to the hippocampus before the color information from V4 or the motion information from MT. Experiments from psychophysics corroborate this: different aspects of visual perception arrive at different times. How are these various topographic maps aligned, in both space and time?

If you're already somewhat familiar with the hippocampus and its surrounding tissues, note that the timeline mapping of event-related electrical activity is completely independent of the synthetic reconstruction that occurs in the time cells in the entorhinal cortex. If we're interested in "when" something happened in relation to some other event, the timeline has a finer scale of resolution than the scene map. Scene mapping aligns with the movements and comings and goings of objects in the environment, whereas the timeline as shown here relates to internal brain function. The timeline is topographically mapped into other brain areas, as are many structures in the brain that connect to the entire cortex as well as subcortical areas; the insular cortex and the claustrum come to mind. There are also areas like the intralaminar nuclei of the thalamus that seem to contain an equivalent topographic whole-brain mapping at the level of the thalamic systems.

Are we really interested in tracing the path of an action potential through the neural network? Well... no, not really. However, we need a way to conceptualize the embedding of brain time into real time. And we can already do interesting things with it, as we'll see momentarily. The pedal hits the metal in the limit as dt → 0, where information affects physical processes and vice versa.

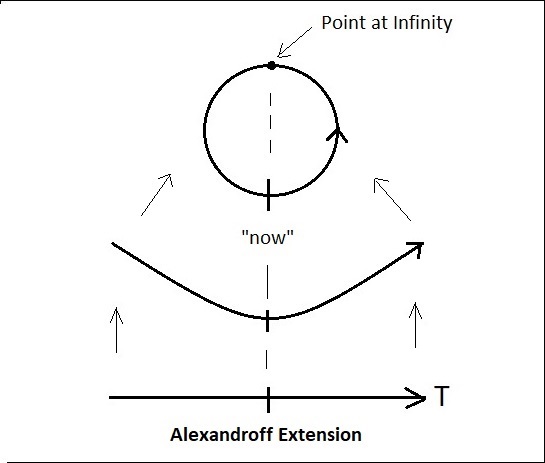

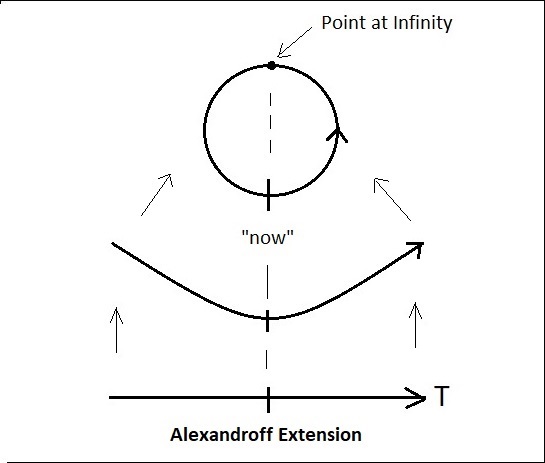

Compactification

Obviously, sensory events can determine motor actions, and therefore the timeline is not just an interval or a line segment: things do not simply “drop off the left end of the timeline”, nor do they magically appear on the right. Instead, the brain uses sensory information to guide the next motor action, meaning that there must be a path from the far left of the timeline to the far right. Given that the purpose of the brain is to optimize the behavior of the organism "in real time", this path should be as fast as possible. (In other words, reciprocal feedback connections between adjacent layers are probably too slow.) To represent the influence of sensory events on motor actions we must create such a path, so we perform a thought experiment and compactify the timeline.

For those with a machine learning background, we'll state explicitly up front that the word "compactification" is used here in the topological sense, meaning to "make compact". It has nothing to do with dimensionality reduction (which we'll address separately on later pages).

The simplest form of compactification is the Alexandroff one-point compactification, which amounts to taking the two ends of the timeline and tying them together. Mathematically this involves adding a point at infinity. We insert this point “between” the ends of the timeline. To compactify this way, we lay out the timeline linearly like a piece of string, then take the two ends and bend them upward (or downward) so they meet. Then we insert the point at infinity between the ends, and glue the whole thing together. (We need the point at infinity because the two ends of the timeline are different, so we can’t simply equate them.) From a neural network standpoint, the point at infinity becomes, in effect, a "hidden unit".
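One common way to make the one-point compactification concrete is to wrap the line onto a circle with an arctangent map. The sketch below is only an illustration under stated assumptions: the function names and the scale parameter `tau` are hypothetical and do not come from the text, and the choice of placing T = 0 at angle 0 is arbitrary.

```python
import math

def compactify(T, tau=100.0):
    """Map a timeline coordinate T (msec) to an angle on the circle.

    theta = 2 * atan(T / tau) sends T = 0 to angle 0, and T -> +inf or -inf
    toward +pi or -pi, which are the same point on the circle.
    tau is a hypothetical scale parameter, not a value from the text.
    """
    if math.isinf(T):
        return math.pi  # both ends of the timeline meet at the added point
    return 2.0 * math.atan(T / tau)

def to_xy(theta):
    """Position of an angle on the unit circle."""
    return (math.cos(theta), math.sin(theta))

def decompactify(theta, tau=100.0):
    """Inverse map; the point at infinity (|theta| = pi) has no finite T."""
    if math.isclose(abs(theta), math.pi):
        return math.inf
    return tau * math.tan(theta / 2.0)
```

With this map, `compactify(-math.inf)` and `compactify(math.inf)` return the same angle, which is the sense in which the two ends of the string are glued together through a single extra point.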

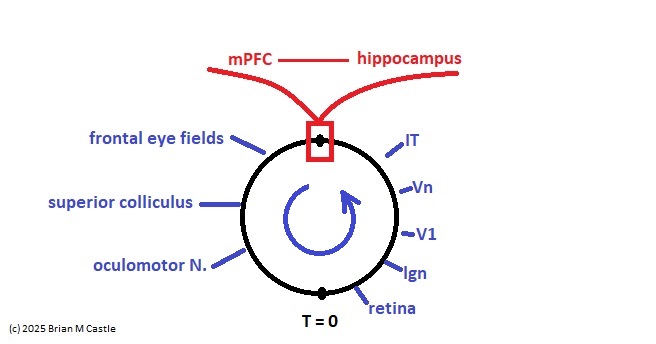

Tracing the orientation of information flow with respect to T and the voluntary motor signals shown earlier, mapping time has the same orientation along the loop as it does along the linear timeline. In the figure above, T runs counterclockwise and voluntary motor information runs clockwise, so we have "flipped" the timeline orientation relative to the diagrams on the previous page by modifying f in the function T = f(t). In the diagram above, the sensory portion is on the right and the motor portion is on the left. One should become accustomed to such geometric manipulations.

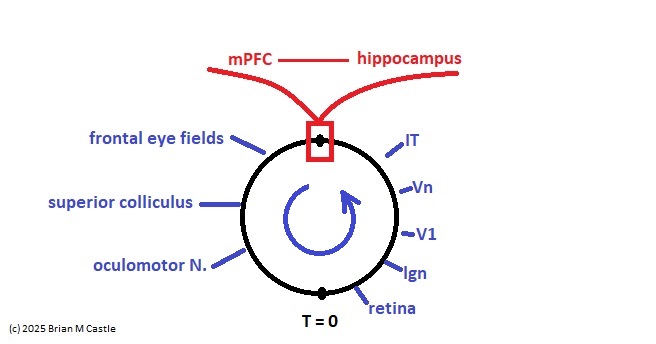

We get an illuminating view if we compactify one of the earlier figures showing the mapping of brain areas onto the timeline.

This architecture is reminiscent of the Nobel prize-winning Boltzmann machine, which looks very much like a compactified network (they don't call it that, but it's one of the natural geometric arrangements). In the Boltzmann machine, the locations of the hidden units are arbitrary, because the machine has no geometric relationship to a timeline. However, it could be mapped in such a way that one of the neurons represents a specific point in time. In the diagram below, h is hidden and v is visible. The visible units can be mapped onto a timeline.

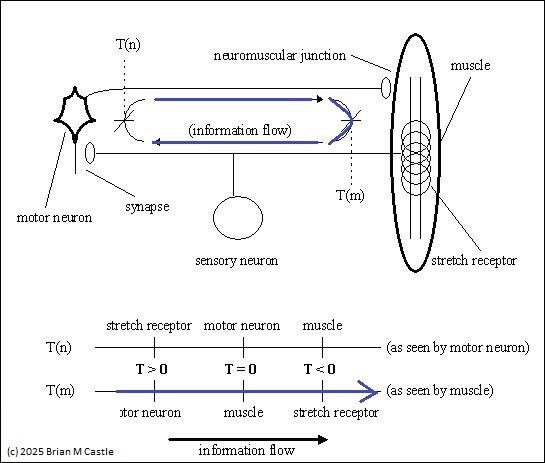

The Boltzmann machine is much more flexible than shown, and we'll return to it later. The point is that the compactification confers a particular geometry on it. The result of compactification is a “projection mapping” of the original time-to-space map, relative to the designated origin. The line segment has become a circle, and one dimension has become two. And now we can do something we couldn't do before, which is rotate the circle. This makes sense from the standpoint of brain architecture, because most of our brain wiring is organized on the basis of “loops” in the connection stream. This is evident even at the most peripheral level, in the construction of neuromuscular reflexes.

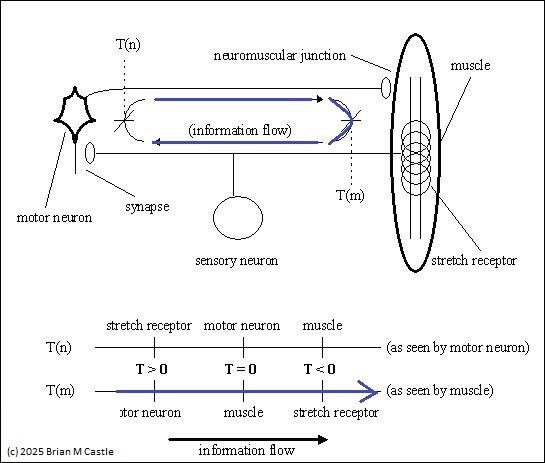

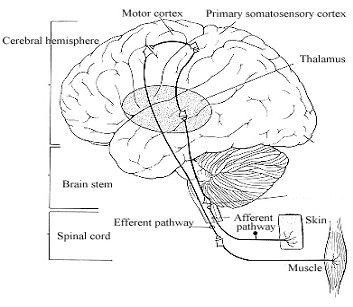

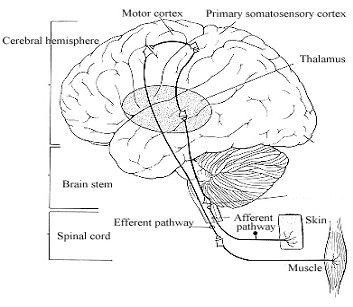

And of course, it is equally evident at a higher level. The figure above shows a short monosynaptic reflex arc in the periphery. Blue indicates the information flow associated with a traditional ERP measurement. Below is the same concept at a more central level, with a more extensive loop. We can build a "loop topology" from such circuits, and if there are unidirectional connections in the network, we can use generalized bidirectional loops for modeling purposes and assign signal strengths of 0 to the unused connections.
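The idea of modeling a unidirectional loop as a generalized bidirectional loop with zero-strength return connections can be sketched as a small weight matrix. This is a minimal illustration, not a model of any specific circuit; the function names, the ring size, and the unit weights are all hypothetical.

```python
def make_loop(n, forward=1.0, backward=0.0):
    """Weight matrix for n units on a ring; w[i][j] is the strength i -> j.

    Every neighboring pair gets both directions, so the loop is formally
    bidirectional; setting backward=0.0 leaves the return path unused.
    """
    if n < 3:
        raise ValueError("need at least 3 units so the two directions differ")
    w = [[0.0] * n for _ in range(n)]
    for i in range(n):
        j = (i + 1) % n
        w[i][j] = forward   # connection in the direction of information flow
        w[j][i] = backward  # reciprocal connection, strength 0 when unused
    return w

def step(w, activity):
    """Propagate activity one synapse along the loop (linear units)."""
    n = len(activity)
    return [sum(activity[i] * w[i][j] for i in range(n)) for j in range(n)]

# A pulse injected at unit 0 travels around a 4-unit loop in one direction.
w = make_loop(4)
pulse = [1.0, 0.0, 0.0, 0.0]
for _ in range(4):
    pulse = step(w, pulse)
print(pulse)  # → [1.0, 0.0, 0.0, 0.0]
```

Keeping the zero-weight entries explicit means the same data structure can describe reflex arcs of either direction, or truly reciprocal loops, by changing only the weights.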

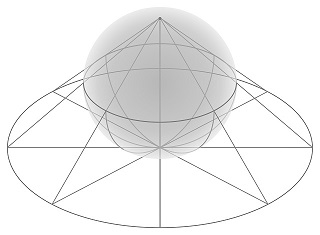

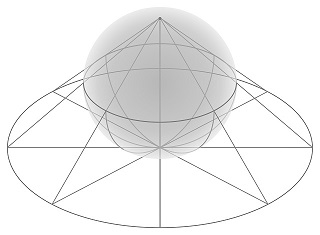

Projection mappings can occur in more than one dimension simultaneously. For example, the figure shows a stereographic projection in two dimensions. Such a mapping would tend to magnify the "foveal region" nearest T=0. High-dimensional scenarios are commonplace in machine learning, where data sets often require thousands of axes in Bayesian space. Such situations are relevant to the real-time extraction of micro-causality, as shown on the next page, and to dozens of other tasks that need to be performed by real brains. The mapping of probabilities to non-Euclidean manifolds is another important aspect of information geometry.

(figure by Mark.Howison CC BY-SA 4.0)
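The magnification near the origin can be checked numerically with the standard inverse stereographic projection from the plane onto the unit sphere. The sketch below assumes dimensionless plane coordinates with the origin standing in for T=0; the function names are hypothetical, and the pole conventions (origin to the south pole, infinity to the north pole) are one common choice among several.

```python
import math

def inverse_stereographic(x, y):
    """Map a point (x, y) of the plane onto the unit sphere.

    Projection is from the north pole (0, 0, 1): the origin lands on the
    south pole (0, 0, -1), and points far away approach the north pole,
    which plays the role of the point at infinity.
    """
    r2 = x * x + y * y
    d = r2 + 1.0
    return (2.0 * x / d, 2.0 * y / d, (r2 - 1.0) / d)

def scale_factor(x, y):
    """Local length magnification of the map at (x, y): 2 / (1 + r^2)."""
    return 2.0 / (1.0 + x * x + y * y)

print(inverse_stereographic(0.0, 0.0))  # → (0.0, 0.0, -1.0)
print(scale_factor(0.0, 0.0))           # → 2.0
```

The scale factor is largest at the origin and falls off with distance, so equal steps near the origin occupy more of the sphere than equal steps far away, which is the "foveal" magnification described above.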

Where Is The Origin?

In reflex arcs such as those shown above, one can ask oneself, “where is the origin?” The electrical activity we predict is always relative to where we measure it. If we put the electrode in the muscle, then that point is “now”: it’s T=0. But we could just as easily put the electrode in the motor neuron, and then the muscle activity would be measured as occurring slightly “later than” the measured event. Translating the origin is equivalent to rotating the compactified circle.
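The equivalence between translating the origin and rotating the circle can be illustrated with a short sketch. It rests on an assumption not stated in the text: the loop is parameterized uniformly, so that a window of fixed length wraps exactly once around the circle. Under a different parameterization (a stereographic one, for instance) a translation would not be a rigid rotation. The window length, the 5 msec latency, and all names are hypothetical.

```python
import math

TWO_PI = 2.0 * math.pi

def to_angle(T, window=400.0):
    """Angle of timeline position T on a loop whose circumference is `window` msec."""
    return (TWO_PI * T / window) % TWO_PI

def rotate(theta, delta):
    """Rotate a position on the circle by delta radians."""
    return (theta + delta) % TWO_PI

def angular_gap(a, b):
    """Smallest absolute angle between two positions on the circle."""
    d = abs(a - b) % TWO_PI
    return min(d, TWO_PI - d)

def shift_origin(events, d):
    """Re-express event times relative to a new origin located at T = d."""
    return {name: T - d for name, T in events.items()}

# Electrode in the muscle: muscle is "now", motor neuron fired 5 msec earlier.
events = {"motor_neuron": -5.0, "muscle": 0.0}
# Move the electrode to the motor neuron: the muscle now occurs at T = +5.
moved = shift_origin(events, -5.0)
rotation = -TWO_PI * (-5.0) / 400.0
for name in events:
    gap = angular_gap(to_angle(moved[name]), rotate(to_angle(events[name]), rotation))
    print(name, gap < 1e-9)
```

Every event lands at the same place whether the origin is shifted first and then mapped to the circle, or mapped first and then rotated by the corresponding angle.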

This is a little weird, from the standpoint of physics. Now is always "now", isn't it? Well... yes and no. "Now" is what we see when we look at the organism. But inside the organism, everything that happens "now" was either previously staged, or will be analyzed in the future. An engineer looking at such a system might be initially discouraged, because there is enormous complexity in teasing apart the components. However, there are unifying principles in brain design, and they all align with physics. The takeaway from a neuroscience standpoint is that "now" is not just a point in time but a "window", and we can map points in physical time to behaviors within the window, in much the same way that the likelihood of a photon presenting with a particular position or momentum is determined probabilistically using the Schrödinger equation.

Why is sensation "in" the periphery rather than "in" the brain? Only the muscles and some primitive receptors are in the periphery; all the complicated computational apparatus is in the brain. So why do sensations seem to be "out there" rather than "in here"? As far as our experience is concerned, short reflexes and long reflexes are experienced at the same time (although careful study reveals that this is not exactly true from a physiological standpoint, only from an experiential standpoint). So why are short and long information flows experienced simultaneously?

The location of a point of experience relative to an information loop can be called its "anchor". In most cases we define the anchor to be coincident with the environmental interface. If we're talking about peripheral receptors and muscles, the anchor would likely be there rather than in the central nervous system. However, if we're talking about a timeline that's being innervated by an embedding network, then the anchors would correspond with neural activity along the timeline. Generally speaking, the anchor corresponds with the intersection of the compactified loops in the "earring topology" shown on the next page.

Consider that the brain can never know what's happening "exactly" now, because there are always conduction delays along the neural pathways. There is a singularity around the information flow through T=0. And the purpose of the brain (as stated) is to optimize the activity exactly within this singularity. It seems like a tall order, doesn't it? The obvious answer is that the brain requires a model of T=0, and since it can never fully know the ground truth, the best it can do is optimize the model to account for the data. In our brains, this optimization occurs "very quickly": its individual bits can be tweaked at rates as high as 1000 GHz, as we'll see shortly. This is one of the many ways the extent of the singularity can be minimized.
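To make the idea of "a model of T=0" concrete, here is a deliberately simple sketch: a signal is only available after a conduction delay, and the present value is estimated by linear extrapolation from the two most recent samples. This is an illustrative stand-in, not a claim about how the brain does it; the function name, the sample format, and the numbers are all hypothetical.

```python
def estimate_now(samples, delay):
    """Extrapolate a delayed signal to the present moment (T = 0).

    samples: list of (time, value) pairs, oldest first, where `time` is when
             the event actually happened.
    delay:   conduction delay in the same units as time; the newest sample
             only becomes available `delay` after it happened.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples to estimate a slope")
    (t1, v1), (t2, v2) = samples[-2], samples[-1]
    slope = (v2 - v1) / (t2 - t1)
    # "Now" is `delay` later than the newest sample, so project forward.
    return v2 + slope * delay

# A signal rising by 3 units per msec, observed with a 20 msec delay.
samples = [(0.0, 0.0), (10.0, 30.0)]
print(estimate_now(samples, delay=20.0))  # → 90.0
```

The estimate is exact only if the signal keeps behaving as the model assumes; any deviation shows up as prediction error once the delayed data arrive, which is what the model would then be optimized against.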

Students of neuroscience will realize that in tying together the two ends of the timeline, we're bringing the hippocampus and prefrontal cortex nearer to each other, in terms of signaling delays and update times. Essentially, they form an important part of the "point at infinity" we created. In compactified form, ERPs will travel "through" the point at infinity. And it is at this point where memory comes into play, especially when we're interested in the relationship between sensory input and voluntary motor activity. The involvement of the hippocampus and prefrontal cortex in short-term memory and scene mapping is entirely logical, and from a systems architecture standpoint, even necessary.

Let's talk about another interesting aspect of the compactified topology, which is its self-similarity. And we'll keep in mind while doing so that the timeline is an abstraction that applies at any level of resolution. The forward portion of the information flow depends on prediction more than it does on voluntary initiation. The voluntary initiation pattern is a "meta-pattern" in the network. There are other patterns too, like simple sensory patterns that only start at T=0 and move leftward, and voluntary motor actions that are intended but never executed.

Next: Fractal Structure
